In the lead-up to the Paris tragedy, the Federal Bureau of Investigation warned about the dangers of ‘going dark’: terrorists encrypting their e-mail, text messages, phone calls, live chat sessions, photos and videos, leading to the loss of a previously exploited intelligence capability.

US and French officials have told The New York Times that the Paris attackers may have used difficult-to-crack encryption technologies to organise the plot. Authorities haven’t yet disclosed whether such encryption technology was used by the perpetrators of the Paris attacks.

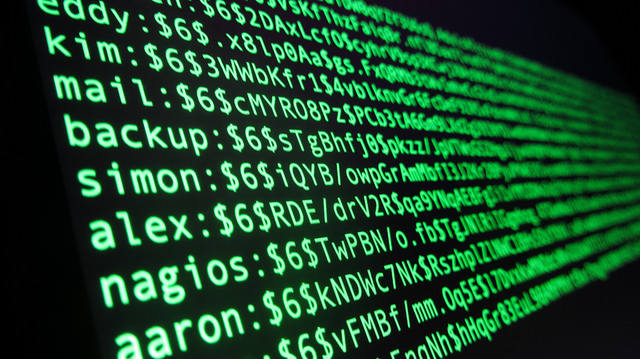

Law enforcement and security agencies have claimed that encrypted platforms built for commercial purposes to safeguard privacy (where only the sender and receiver hold the keys and devices to decipher the message) are a gift to terrorists and criminals, helping them communicate in a way that puts them beyond the law’s reach.

Indonesian terrorism analyst Sidney Jones recently pointed out that ‘many of the committed ISIS supporters from Indonesia are using encrypted communications over WhatsApp and Telegram, not ordinary mobile phone communications that the Indonesian police can tap’.

The mobile messaging service Telegram has emerged as an important new promotional and recruitment platform for ISIL:

‘The main appeal of Telegram is that it allows users to send strongly encrypted messages, for free, to any number of a user’s phones, tablets or computers, which is useful to people who need to switch devices frequently. It has group messaging features that allow members to share large videos, voice messages or lots of links in one message, without detection by outsiders because this service does not run through the computing cloud’.

In January an ISIL follower provided would-be fighters with a list of what he determined were the safest encrypted communications systems. Soon after the list was published, ISIL started moving official communications from Twitter to Telegram.

Both in the US and in the UK there has been a push either to ban encryption, on the grounds that we’re paying too high a price in terms of security, or at the very least to request that companies like Apple, Google and Facebook build ‘backdoors’ that give law enforcement access to their encrypted tools.

Tech companies have resisted any push to hand over to law enforcement the keys to their customers’ encrypted data. Privacy advocates have, post-Snowden, defended the technologies as a protection against government snooping.

But tech companies, many of which have been helpful in combating child pornography, are arguing that there are real risks of going along with government requests to access encrypted messages. Apple CEO Tim Cook, for example, recently warned that ‘any back door is a back door for everyone. Everybody wants to crack down on terrorists. Everybody wants to be secure. The question is how. Opening a back door can have very dire consequences.’

The Obama administration recently announced that it wouldn’t push for a law that would force computer and communications manufacturers to add backdoors to their products for law enforcement. It concluded that criminals, terrorists, hackers and foreign spies would use that backdoor as well.

Meanwhile, the NSW Crime Commission’s latest annual report states that ‘uncrackable’ phones have hindered at least two murder investigations in Sydney. NSW police this year travelled to BlackBerry’s headquarters in Canada in a bid to get advice on how to retrieve information from encrypted devices.

I can’t offer a technical solution to this issue. But as I’ve argued before, when it comes to privacy and security, we need to find the right balance so that new encryption technologies don’t obstruct our counterterrorism efforts.

We need secure encrypted systems for commerce, government and the protection of personal information (our digital identity). Banks and telcos are using those products to safeguard our data. Deliberately weakening encryption solutions would arguably weaken key infrastructure, with the ultimate beneficiaries being criminals, cyber activists and rival nation states.

A cooperative solution, with our police and security agencies working with relevant companies to identify the problems, would be better than trying to introduce laws to restrict encryption.

Indeed, there’s no guarantee that banning the use of encryption solutions, as recently proposed in the UK by David Cameron, would be effective. Users and companies might migrate to other countries, solutions, products or servers.

Intelligence agencies will want to be ‘data-holics’ and be able to read all communications, back to the ‘good ol’ days’, so that they can judge for themselves what’s important, rather than needing to go to companies on a case-by-case basis.

Instead of handing over the ‘keys to crypto’ there may, however, need to be more effort by our law enforcement and security agencies to strengthen ‘old school’ human tradecraft.

Just as the blind may be able to compensate for their lack of sight with enhanced hearing or other abilities, our security agencies may also need to hone some of their old-fashioned skills. Back to the future.