In September, Chile became the first state in the world to pass legislation regulating the use of neurotechnology. The ‘neuro-rights’ law aims to protect mental privacy, free will of thought and personal identity.

The move comes amid both growing excitement and growing concern about the potential applications of neurotechnology for everything from defence to health care to entertainment.

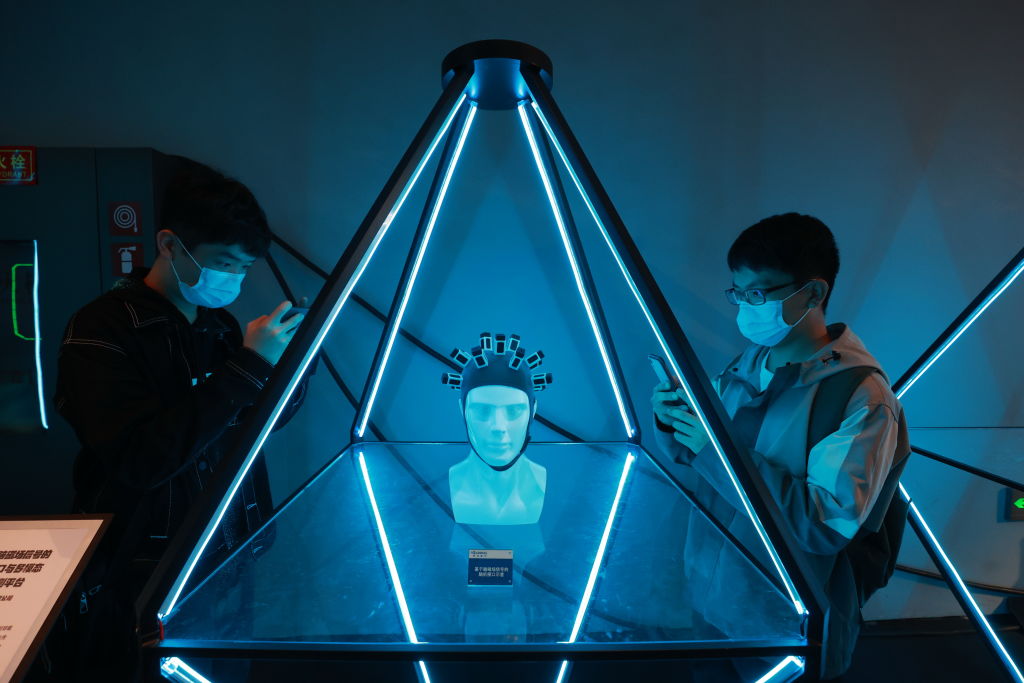

Neurotechnology is an umbrella term for a range of technologies which interact directly with the brain or nervous system. This can include systems which passively scan, map or interpret brain activity, or systems which actively influence the state of the brain or nervous system.

Governments and the private sector alike are pouring money into research on neurotechnology, in particular into the viability and applications of brain–computer interfaces (BCIs), which allow users to control computers with their thoughts. While the field is still in its infancy, it is advancing at a rapid pace, creating technologies that only a few years ago would have seemed like science fiction.

The implications of these technologies are profound. When fully realised, they have the potential to reshape the most fundamental and most personal element of human experience: our thoughts.

Technological development and design are never neutral. We encode values into every piece of technology we create. Because neurotechnology is so immensely consequential, it's crucial for us to think early and often about the way we're constructing it, and about the types of systems we do, and don't, want to build.

A major driver of government research on neurotechnology is its potential application in defence and combat settings. Unsurprisingly, the United States and China are leading the pack in the race towards effective military neurotechnology.

The US’s Defense Advanced Research Projects Agency (DARPA) has poured many millions of dollars of funding into neurotechnology research over multiple decades. In 2018, DARPA announced a program called ‘next-generation nonsurgical neurotechnology’, or N3, to fund six separate, highly ambitious BCI research projects.

Individual branches of the US military are also developing their own neurotechnology projects. For example, the US Air Force is working on a BCI which will use neuromodulation to alter mood, reduce fatigue and enable more rapid learning.

In comparison to DARPA's decades of interest in the brain, China's focus on neurotechnology is relatively recent but advancing rapidly. In 2016, the Chinese government launched the China Brain Project, a 15-year scheme intended to bring China level with, and eventually ahead of, the US and EU in neuroscience research. In April, Tianjin University and state-owned giant China Electronics Corporation announced that they were collaborating on the second generation of 'Brain Talker', a chip designed specifically for use in BCIs. Experts have described China's efforts in this area as an example of civil–military fusion, in which technological advances serve multiple agendas.

Australia is also funding research into neurotechnology for military applications. For example, at the Army Robotics Expo in Brisbane in August, researchers from the University of Technology Sydney demonstrated a vehicle which could be remotely controlled via brainwaves. The project was developed with $1.2 million in funding through the Department of Defence.

Beyond governments, the private-sector neurotechnology industry is also picking up steam; 2021 is already a record year for funding of BCI projects. Estimates put the industry at US$10.7 billion globally in 2020, and it’s expected to reach US$26 billion by 2026.

In April, Elon Musk’s Neuralink demonstrated a monkey playing Pong using only brainwaves. Gaming company Valve is teaming up with partners to develop a BCI for virtual-reality gaming. After receiving pushback on its controversial trials of neurotechnology on children in schools, BrainCo is now marketing a mood-altering headband.

In Australia, university researchers have worked with biotech company Synchron to develop Stentrode, a BCI that is implanted via the jugular vein and allows patients with limb paralysis to use digital devices. It is now undergoing human clinical trials in Australia and the US.

The combination of big money, big promises and, potentially, big consequences should have us all paying attention. The potential benefits from neurotechnology are immense, but they are matched by enormous ethical, legal, social, economic and security concerns.

In 2020, researchers conducted a meta-review of the academic literature on the ethics of BCIs. They identified eight specific ethical concerns: user safety; humanity and personhood; autonomy; stigma and normality; privacy and security (including cybersecurity and the risk of hacking); research ethics and informed consent; responsibility and regulation; and justice. Of these, autonomy, along with responsibility and regulation, received the most attention in the existing literature. In addition, the researchers argued that the potential psychological impacts of BCIs on users need to be considered.

While Chile is the first and so far only country to legislate on neurotechnology, groups such as the OECD are looking seriously at the issue. In 2019 the OECD Council adopted a recommendation on responsible innovation in neurotechnology which aimed to set the first international standard to drive ethical research and development of neurotechnology. Next month, the OECD and the Council of Europe will hold a roundtable of international experts to discuss whether neurotechnologies need new kinds of human rights.

In Australia, the interdisciplinary Australian Neuroethics Network has called for a nationally coordinated approach to the ethics of neurotechnology and has proposed a neuroethics framework.

These are the dawning days of neurotechnology. Many of the crucial breakthroughs to come may not yet be so much as a twinkle in a scientist’s eye. That makes now the ideal moment for all stakeholders—governments, regulators, industry and civil society—to be thinking deeply about the role neurotechnology should play in the future, and where the limits should be.